Note on Transparency: This article was generated with the assistance of Artificial Intelligence to provide a comprehensive and up-to-date overview of the discussed topic.

From Prompts to Context: Mastering Context Engineering for Autonomous AI Agents in 2026

Introduction: Why Prompting is Evolving

In early 2025, the industry was obsessed with "writing the perfect prompt." However, as we move through 2026, the focus has shifted. We are now in the era of Agentic AI—systems that don't just chat, but execute tasks autonomously.

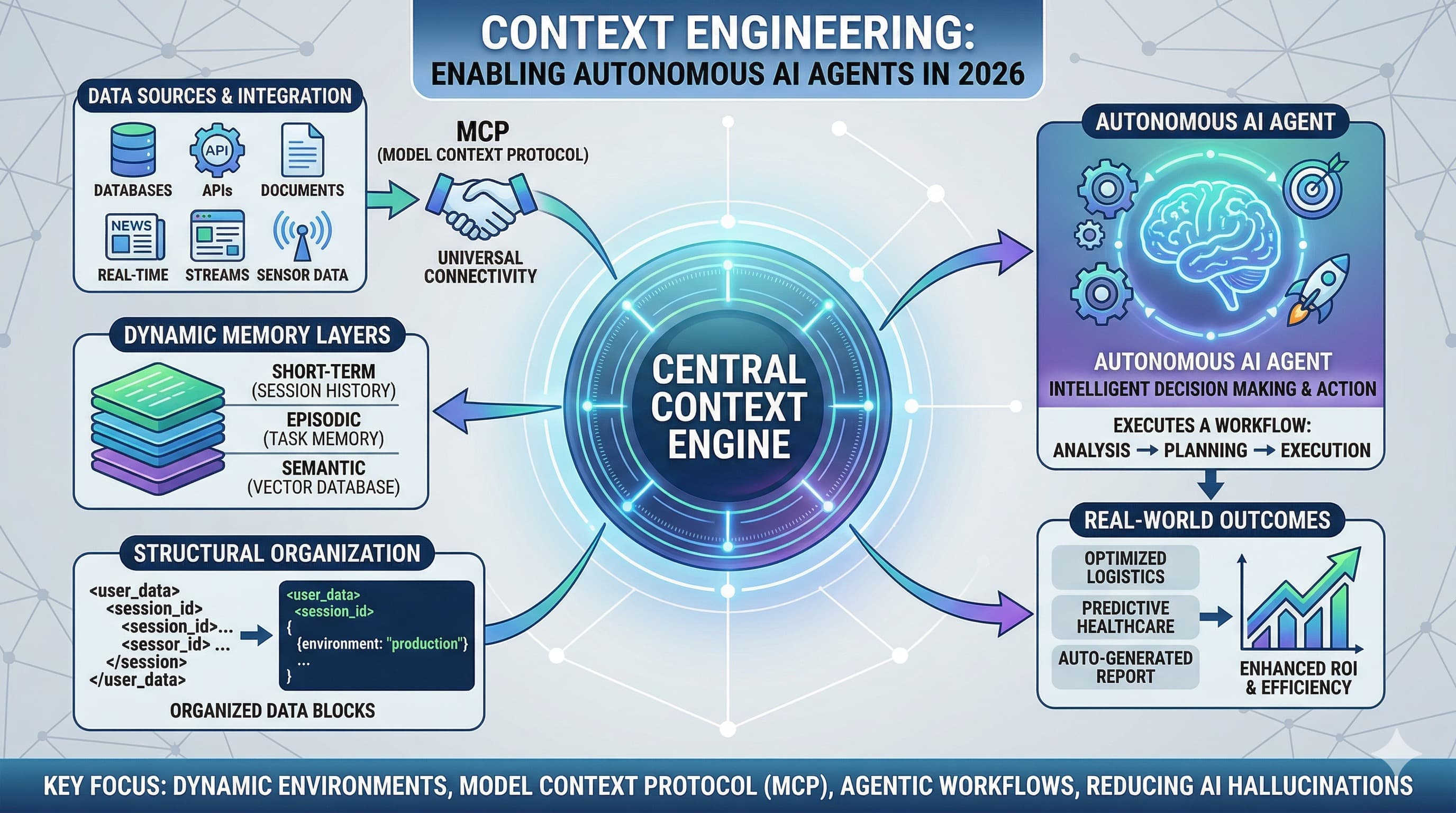

The secret to making these agents reliable isn't a better adjective; it’s Context Engineering. While traditional prompting provides a static instruction, Context Engineering builds a dynamic ecosystem that allows an AI to understand history, access live tools, and maintain "state" across complex workflows.

The Foundation: Bridging Markdown and Context

Before mastering the high-level architecture of context, it is essential to understand how to structure the information the AI receives. Proper formatting is the first step in reducing "model confusion."

Pro Tip: If you haven't yet mastered how to use syntax to guide AI behavior, check out my deep dive on Mastering Markdown Prompting. Using clear Markdown headers and blocks is the prerequisite for the advanced Context Engineering techniques discussed below.

The 2026 Jargon Buster

To lead in this field, you must understand these three pillars of the modern AI stack:

- Context Engineering: The architectural design of the "data environment" fed to an LLM, ensuring it has only the most relevant information at each step of a task.

- Model Context Protocol (MCP): An open protocol, introduced by Anthropic in late 2024 and since adopted widely across the industry, that standardizes how AI agents connect to local and remote data sources and tools like Google Drive, Slack, or GitHub.

- Agentic RAG (Retrieval-Augmented Generation): An advanced form of RAG where the AI decides what information to search for, rather than having a fixed set of documents pushed to it.
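To make the MCP entry concrete: MCP messages are JSON-RPC 2.0. The sketch below assumes a client that already has a connection to an MCP server (the stdio or HTTP transport is not shown) and only builds a `tools/call` request and reads the text items out of a result. The helper names, the tool name `search_files`, and its arguments are hypothetical, and the response is a hand-written sample rather than output from a real server.

```python
import json
from itertools import count

# Monotonically increasing ids so responses can be matched to requests.
_request_ids = count(1)


def make_tool_call(name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }
    return json.dumps(message)


def extract_text(response_json: str) -> str:
    """Return the text content of a `tools/call` response, raising on errors."""
    response = json.loads(response_json)
    if "error" in response:  # protocol-level JSON-RPC error
        raise RuntimeError(response["error"].get("message", "unknown error"))
    result = response["result"]
    if result.get("isError"):  # the tool ran but reported a failure
        raise RuntimeError("tool reported an error")
    return "\n".join(
        item["text"]
        for item in result.get("content", [])
        if item.get("type") == "text"
    )


if __name__ == "__main__":
    # Hypothetical tool name and arguments.
    print(make_tool_call("search_files", {"query": "Q3 revenue"}))

    # Hand-written sample response in the shape an MCP server returns.
    sample = json.dumps({
        "jsonrpc": "2.0",
        "id": 1,
        "result": {
            "content": [{"type": "text", "text": "Found 2 matching files."}],
            "isError": False,
        },
    })
    print(extract_text(sample))
```

Because the wire format is plain JSON-RPC, the same request shape works regardless of which agent framework sits on top.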

Core Pillars of a Context-First Strategy

1. Structured Context & Semantic Tagging

Modern LLMs tend to use context more reliably when it is structured. Instead of a wall of text, context engineers wrap information in distinct tagged blocks like <user_profile>, <session_history>, and <tool_definitions>. This helps the model "attend" to the relevant section without losing the thread in "context fog."
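A minimal sketch of assembling such tagged context in Python. The helper name `build_context` and the sample section contents are hypothetical; the tag names come from the paragraph above. Section bodies are XML-escaped so that user-supplied text cannot accidentally close a tag, while tag names are assumed to come from trusted code.

```python
from xml.sax.saxutils import escape


def build_context(sections: dict, task: str) -> str:
    """Render each context section inside its own tag, followed by the task.

    Tag names are assumed to come from trusted code; only the bodies are escaped.
    """
    blocks = []
    for tag, body in sections.items():
        # Escape &, < and > so section text cannot open or close a tag.
        blocks.append(f"<{tag}>\n{escape(body.strip())}\n</{tag}>")
    blocks.append(f"<task>\n{escape(task.strip())}\n</task>")
    return "\n\n".join(blocks)


if __name__ == "__main__":
    prompt = build_context(
        {
            "user_profile": "Role: finance lead. Prefers bullet points.",
            "session_history": "User asked for a Q3 revenue summary.",
            "tool_definitions": "get_revenue(quarter): returns monthly revenue as JSON",
        },
        task="Explain why revenue dipped in Q3.",
    )
    print(prompt)
```

Keeping the task in its own final block means the instruction is never buried inside the background material.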

2. Dynamic Memory Layers

In 2026, we no longer feed the entire conversation history into the model. We use a tiered approach:

- Short-term Memory: The most recent 5–10 interactions, kept verbatim for local coherence.

- Episodic Memory: Summarized versions of previous "episodes" or tasks to track long-term goals.

- Semantic Memory: Facts stored in a Vector Database (like Pinecone or Milvus) that the agent pulls only when needed.
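The three tiers can be sketched as a single class. This is a toy under stated assumptions: embeddings are hand-made 3-dimensional vectors, a linear cosine-similarity scan stands in for a vector database such as Pinecone or Milvus, and episode summaries are passed in rather than generated by an LLM. `TieredMemory` and its methods are hypothetical names.

```python
import math
from collections import deque


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TieredMemory:
    """Toy three-tier memory: short-term window, episode summaries, semantic facts."""

    def __init__(self, short_term_size=8, max_episodes=3):
        # deque(maxlen=...) silently drops the oldest turn once full.
        self.short_term = deque(maxlen=short_term_size)
        self.episodes = []  # summaries of finished tasks
        self.max_episodes = max_episodes
        self.facts = []  # (embedding, text) pairs

    def add_turn(self, turn):
        self.short_term.append(turn)

    def close_episode(self, summary):
        # In a real agent an LLM would write this summary; here it is passed in.
        self.episodes.append(summary)
        self.short_term.clear()

    def add_fact(self, embedding, text):
        self.facts.append((embedding, text))

    def recall(self, query_embedding, k=2, min_score=0.5):
        """Return up to k facts whose similarity to the query is at least min_score."""
        scored = sorted(
            ((cosine(query_embedding, emb), text) for emb, text in self.facts),
            key=lambda pair: pair[0],
            reverse=True,
        )
        return [text for score, text in scored[:k] if score >= min_score]

    def build_context(self, query_embedding):
        """Assemble the tiers, pulling semantic facts only when they are relevant."""
        recent_episodes = self.episodes[-self.max_episodes:] if self.max_episodes else []
        return {
            "semantic_memory": self.recall(query_embedding),
            "episodic_memory": recent_episodes,
            "short_term_memory": list(self.short_term),
        }


if __name__ == "__main__":
    memory = TieredMemory(short_term_size=3)
    for i in range(5):
        memory.add_turn(f"turn {i}")
    memory.add_fact([1.0, 0.0, 0.0], "Fiscal year starts in April.")
    memory.add_fact([0.0, 1.0, 0.0], "Reports use EUR.")
    print(memory.build_context([0.9, 0.1, 0.0]))
```

Only facts that clear the similarity threshold reach the context, which is the "pull only when needed" behavior described above; the `k` and `min_score` defaults are arbitrary.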

Context Engineering vs. Prompt Engineering: The 2026 Shift

| Feature | Prompt Engineering (Traditional) | Context Engineering (Current Standard) |
|---|---|---|
| Focus | Wording and phrasing of the query. | Managing the flow of data to the model. |
| State | Stateless (single-turn interactions). | Stateful (remembers goals across days). |
| Data Source | Static text within the prompt window. | Dynamic API calls via MCP and live tools. |
| Outcome | A single high-quality answer. | A completed end-to-end business process. |

Real-World Application: The "Autonomous Analyst"

Imagine an AI Financial Analyst.

- The Old Way: You manually paste a CSV and ask for a summary.

- The 2026 Way: The agent has Context Access to your live ERP system. It notices a dip in revenue, autonomously retrieves the last three months of marketing spend, queries your competitive intelligence graph, and presents a root-cause analysis before you even start your workday.
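The "2026 Way" boils down to a loop in which the agent picks its next tool based on what it has observed so far. In the sketch below, a rule-based `plan_next` function stands in for the LLM's decision, and every tool, name, and number is made up for illustration; in a real system the tools might be exposed through MCP. The `max_steps` cap is a simple form of the bounded autonomy discussed in the pitfalls.

```python
from typing import Callable, Dict, Optional


# Hypothetical tools with made-up data, standing in for ERP, marketing and
# competitive-intelligence sources.
def get_monthly_revenue():
    return [120.0, 118.0, 97.0]  # last value is the current month


def get_marketing_spend(months):
    return [30.0, 29.0, 12.0][-months:]


def query_competitor_graph():
    return "No major competitor launches this quarter."


TOOLS: Dict[str, Callable[[], object]] = {
    "revenue": get_monthly_revenue,
    "marketing": lambda: get_marketing_spend(3),
    "competitors": query_competitor_graph,
}


def plan_next(observations: dict) -> Optional[str]:
    """Rule-based stand-in for the LLM choosing the next tool (None = stop)."""
    if "revenue" not in observations:
        return "revenue"
    revenue = observations["revenue"]
    if revenue[-1] >= 0.9 * revenue[-2]:  # drop of 10% or less: nothing to investigate
        return None
    for tool in ("marketing", "competitors"):
        if tool not in observations:
            return tool
    return None


def run_analyst(max_steps: int = 5) -> dict:
    """Call tools until the planner stops or the step cap is hit."""
    observations = {}
    for _ in range(max_steps):  # hard cap on autonomous actions
        tool = plan_next(observations)
        if tool is None:
            break
        observations[tool] = TOOLS[tool]()
    return observations


def summarize(observations: dict) -> str:
    """Turn the gathered observations into a short root-cause note."""
    if "marketing" not in observations:
        return "No significant revenue dip detected."
    revenue, spend = observations["revenue"], observations["marketing"]
    revenue_drop = (revenue[-2] - revenue[-1]) / revenue[-2]
    spend_drop = (spend[-2] - spend[-1]) / spend[-2]
    return (
        f"Revenue fell {revenue_drop:.0%} month over month while marketing spend "
        f"fell {spend_drop:.0%}. Competitors: {observations.get('competitors', 'not checked')}"
    )


if __name__ == "__main__":
    print(summarize(run_analyst()))
```

The agent only fetches marketing and competitor data after it has seen the dip, so each step's context contains just what the previous observation made relevant.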

Common Pitfalls to Avoid

- Context Poisoning: Feeding the agent large amounts of irrelevant data (noise), which dilutes the useful signal, makes "hallucinations" more likely, and drives up token costs.

- Lack of Guardrails: Giving an autonomous agent tools and data access without Bounded Autonomy. Always set limits on what the agent can execute (e.g., spending limits or delete permissions).

- Ignoring Token Efficiency: Even with 2M+ token windows, "filling the window" still increases latency and cost. Use Context Pruning to keep only what the current step needs, so your agent stays fast.
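Context pruning can be as simple as ranking candidate chunks by relevance, dropping low-relevance noise, and keeping the best ones that fit a token budget. The sketch assumes relevance scores already come from your retriever or reranker and uses a rough 4-characters-per-token estimate instead of a real tokenizer; `prune_context`, `estimate_tokens`, and their parameters are hypothetical.

```python
def estimate_tokens(text: str) -> int:
    # Rough heuristic (assumption): about 4 characters per token in English.
    return max(1, len(text) // 4)


def prune_context(chunks, budget_tokens, pinned=(), min_score=0.3):
    """Select context under a token budget.

    chunks: (relevance_score, text) pairs from a retriever or reranker.
    pinned: texts that must always be included (e.g. the system instructions).
    """
    kept = list(pinned)
    used = sum(estimate_tokens(text) for text in kept)
    # Most relevant first; ties keep their input order because sorted() is stable.
    for score, text in sorted(chunks, key=lambda chunk: chunk[0], reverse=True):
        if score < min_score:
            continue  # low-relevance noise is dropped even if it would fit
        cost = estimate_tokens(text)
        if used + cost <= budget_tokens:
            kept.append(text)
            used += cost
    return kept


if __name__ == "__main__":
    chunks = [
        (0.92, "Q3 revenue fell after the July campaign was paused."),
        (0.15, "The office coffee machine was replaced in August."),
        (0.74, "Marketing spend dropped sharply in September."),
    ]
    print(prune_context(chunks, budget_tokens=40, pinned=["You are a financial analyst."]))
```

Because the loop keeps scanning after a chunk fails to fit, smaller lower-ranked chunks can still fill the remaining budget. Survivors come back in relevance order; if the source order matters for readability, re-sort them by original position before rendering.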

Conclusion & Your Next Steps

Mastering Context Engineering is the single best way to future-proof your career in the AI economy. To start building:

- Explore MCP: Research how AI applications such as the Gemini CLI use the Model Context Protocol to connect to your local files.

- Refine Your Structure: Go back to the basics of Markdown Prompting to ensure your data blocks are clean.

- Build a Loop: Experiment with agent frameworks like LangGraph or CrewAI to see how state is managed in the real world.

If you found this guide helpful, stay tuned to the sudshekhar.com blog for more insights into the 2026 AI landscape.
